Intro

The paper has been published in Springer notes, which can be found here; the pre-print is available here. This page covers the basics of the system so that you can integrate it into your own projects. I would recommend watching the YouTube video, which is a more “entertaining” version of the presentation I gave at HCI International 2023.

Key Features

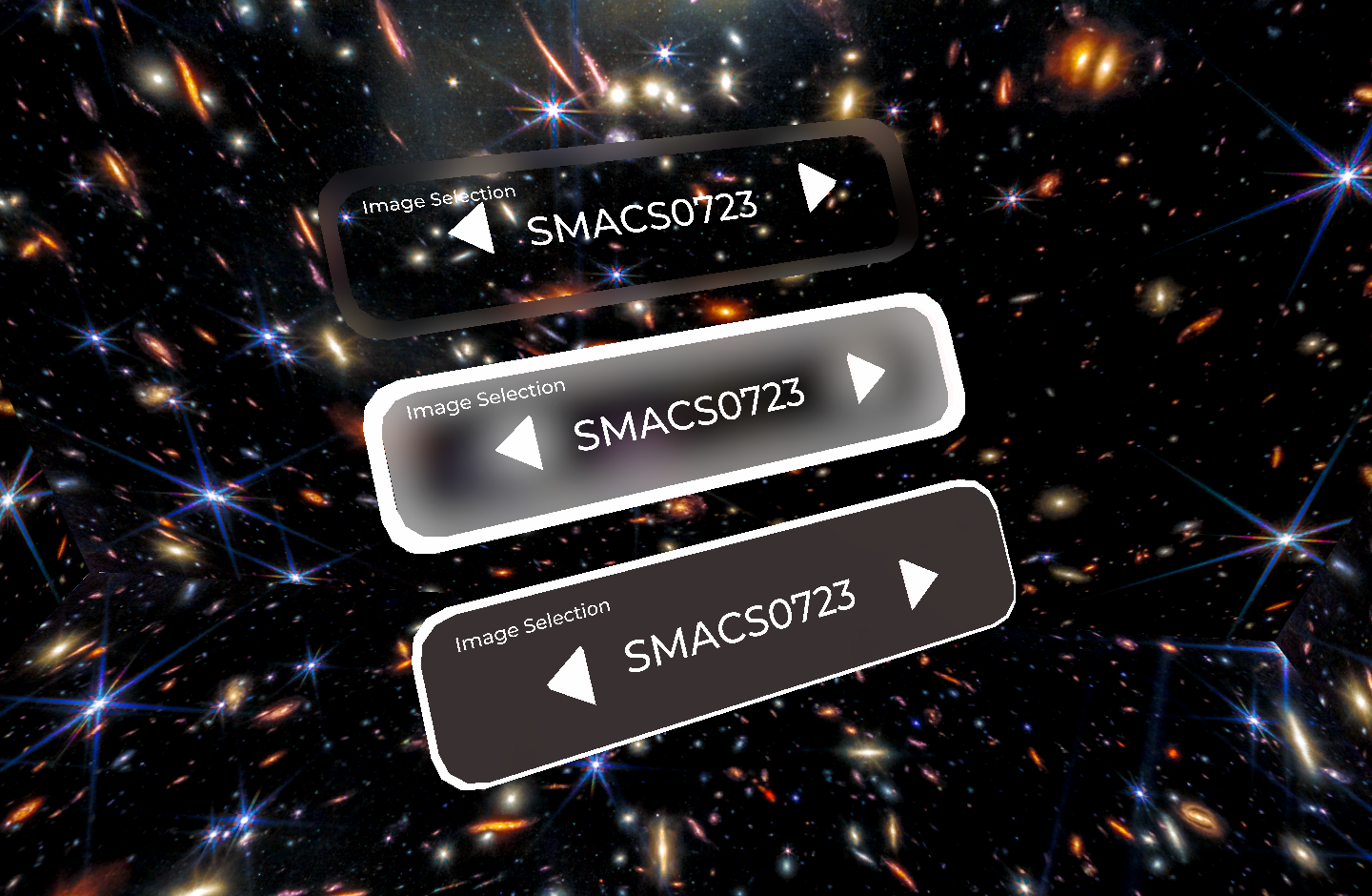

Existing interfaces are rarely, if ever, designed with accessibility in mind. Accessibility is difficult for developers to implement because it is not clear exactly what makes a design accessible. This interface has been developed with an accessibility-first design philosophy, aiming to remove this responsibility from the developer. It takes a multimodal input approach, letting users select their preferred input mechanism, and also supports relocating UI elements.

- Free-floating, allowing adjustment in 6-DOF for users with specific viewing angle requirements, as well as anchored placement in 3D space.

- Contextual, allowing elements to be displayed only when relevant to the location in the environment, resulting in:

- Predictable, task-focused interactions, using skeuomorphs and colors around 3D interface trims in designated context zones.
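To make the contextual behaviour concrete, here is a minimal sketch of showing UI elements only while the user is inside a designated context zone. It is an illustration, not the project's actual implementation: the names `ContextZone`, `UIElement` and `visible_elements`, and the choice of axis-aligned boxes for zones, are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class ContextZone:
    """Hypothetical context zone: an axis-aligned box in world space."""
    name: str
    min_corner: Vec3
    max_corner: Vec3

    def contains(self, point: Vec3) -> bool:
        # The point is inside when every coordinate lies within the box bounds.
        return all(
            lo <= p <= hi
            for lo, p, hi in zip(self.min_corner, point, self.max_corner)
        )


@dataclass(frozen=True)
class UIElement:
    """Hypothetical UI element tagged with the zone where it is relevant."""
    label: str
    zone: str


def visible_elements(
    user_position: Vec3,
    zones: List[ContextZone],
    elements: List[UIElement],
) -> List[str]:
    """Return labels of elements whose zone currently contains the user."""
    active = {z.name for z in zones if z.contains(user_position)}
    return [e.label for e in elements if e.zone in active]


zones = [
    ContextZone("workbench", (0.0, 0.0, 0.0), (2.0, 2.0, 2.0)),
    ContextZone("map_table", (5.0, 0.0, 0.0), (7.0, 2.0, 2.0)),
]
elements = [
    UIElement("Layer toggle", "map_table"),
    UIElement("Playback controls", "workbench"),
]

# Standing at the workbench, only the workbench element is listed.
print(visible_elements((1.0, 1.0, 1.0), zones, elements))
```

In a real engine the zone test would more likely be a trigger volume than a manual box check; the point of the sketch is only that visibility is driven by where the user is.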

Demo Download

From the GitHub repo you can download various demonstrations of the application. You can integrate this into your project by creating a panel with a border that allows for grabbable interactions.

- Point cloud, video, photogrammetry and GIS dataset visualisation with custom placement for UI

- Panels with borders that act as buffer zones to prevent interacting with the attached UI components

- Kinematic panels that work with the physics engine, allowing static placement while still interacting with the environment
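The border-as-buffer-zone idea can be sketched as a simple hit test: a pointer landing on the border trim is treated as a grab, while a pointer inside the border reaches the attached UI components. This is a rough sketch under stated assumptions, not the project's code: the `Panel` type, the `classify_hit` function, and the panel-local 2D coordinates with the origin at the bottom-left corner are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Panel:
    """Hypothetical panel, sized in metres, with a grabbable border trim."""
    width: float
    height: float
    border: float  # thickness of the border on every side


def classify_hit(panel: Panel, x: float, y: float) -> str:
    """Classify a pointer hit given in panel-local coordinates.

    Returns "outside", "border" (grab the panel) or "content"
    (pass the interaction on to the attached UI components).
    """
    if not (0.0 <= x <= panel.width and 0.0 <= y <= panel.height):
        return "outside"
    in_content = (
        panel.border <= x <= panel.width - panel.border
        and panel.border <= y <= panel.height - panel.border
    )
    return "content" if in_content else "border"


panel = Panel(width=1.0, height=0.6, border=0.05)
print(classify_hit(panel, 0.02, 0.3))  # hit lands on the left border trim
print(classify_hit(panel, 0.5, 0.3))   # hit lands on the panel content
```

Keeping the grab region separate from the content region is what lets a user reposition a panel without accidentally triggering the controls attached to it.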

Further Reading

Check out the following links for inspiration and further reading on this topic.